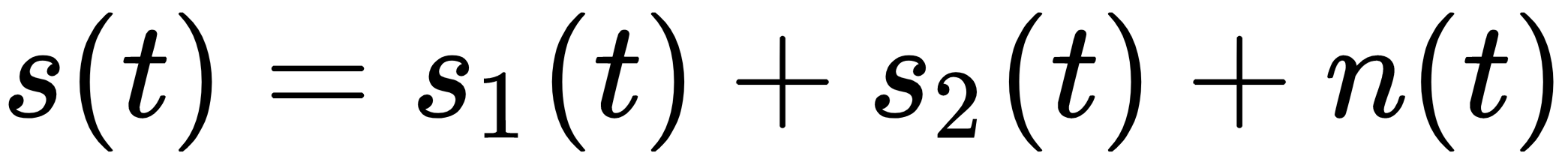

Sometimes, it's useful to process the data in order to extract components that are uncorrelated and independent. To better understand this scenario, let's suppose that we record two people while they sing different songs. The resulting recording is clearly very noisy, but we know that the recorded stochastic signal, denoted here as $x(t)$, can be decomposed as follows:

$$x(t) = s_1(t) + s_2(t) + n(t)$$

The first two terms are the individual music sources (modeled as stochastic processes), while $n(t)$ is additive Gaussian noise. Our goal is to find $s_1(t) + n_1(t)$ and $s_2(t) + n_2(t)$, where $n_1(t)$ and $n_2(t)$ are the parts of the additive noise that cannot be filtered out of each source, so that we can remove one of the two sources. Performing this task with standard PCA is very difficult because PCA only decorrelates the components and places no constraint on their independence. This problem has been widely studied by Hyvärinen and Oja (please refer to Independent Component...